TL;DR

- Enterprises face 3× more AI workloads than available GPUs heading into 2026

- Traditional schedulers fail heterogeneous workloads (training + inference + RAG + analytics)

- Software like Kubex can unlock significant GPU capacity gains using MIG + dynamic fragmentation

- Power, not GPUs, will be the binding constraint by 2027

The GPU Supply Crisis (2026 Reality Check)

By 2026, the GPU shortage isn’t a supply-chain hiccup anymore. It’s baked into the system.

Even after pouring billions into CapEx, most enterprises still want 40% more GPU capacity than they actually have. And it’s not because they’re chasing moonshots. Technology companies are training foundation models while serving inference for millions of users on the same clusters. AI labs are juggling fine-tuning, evaluation, and real-time experimentation side by side. Retail and consumer platforms are running forecasting, recommendation, and personalization pipelines that never really shut off. Media and gaming teams are mixing rendering, simulation, and generative workloads with live inference.

All of these workloads look different, behave differently, and stress GPUs in completely different ways, yet they’re increasingly forced to coexist on shared infrastructure.

The twist is that this is all happening inside power-constrained data centers. More than 30% of large enterprises have already hit hard power caps, meaning even when budget is approved, there’s nowhere left to deploy new GPUs.

And that’s where the uncomfortable truth sets in:

The real problem isn’t GPU availability. It’s how GPUs are being used.

Most platforms still schedule GPUs as if every job needs a full device. That “one-GPU-per-workload” mindset made sense once. Today, it quietly wastes capacity, inflates cost, and slows teams down right when velocity matters most.

Why Current Solutions Fail Heterogeneous Workloads

Most enterprise teams already feel that something’s off. The GPUs are expensive, queues keep growing, and somehow utilization still looks terrible. That’s because the tools we’re using weren’t built for the kind of AI workloads we’re running today.

Take Kubernetes GPU scheduling. It does what it was designed to do, placing pods on nodes and counting GPUs as whole, integer resources, but it has no real understanding of how AI workloads behave. It doesn’t know model memory profiles, can’t reason about MIG slice compatibility, and has zero awareness of per-workload power draw. So GPUs show up as “allocated” while, in reality, more than half the capacity quietly goes unused.
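A minimal sketch of the allocated-versus-used gap, under stated assumptions: the pod names, the 80 GB device size, and the memory figures are all hypothetical, and memory is used as a stand-in for overall utilization. Each pod requests one whole GPU, so a scheduler that counts whole devices reports the cluster as fully allocated even though most of the memory sits idle.

```python
from dataclasses import dataclass

GPU_MEM_GB = 80.0  # assumed memory per device (hypothetical 80 GB accelerator)

@dataclass
class Pod:
    name: str
    gpus_requested: int  # whole devices, as a device-count scheduler sees them
    mem_used_gb: float   # memory the workload actually touches

def allocation_report(pods: list[Pod], total_gpus: int) -> tuple[float, float]:
    """Return (fraction of GPUs allocated, fraction of GPU memory actually used)."""
    allocated = sum(p.gpus_requested for p in pods)
    used_mem = sum(p.mem_used_gb for p in pods)
    return allocated / total_gpus, used_mem / (total_gpus * GPU_MEM_GB)

# Hypothetical pods: each holds a full GPU but uses only part of its memory.
pods = [
    Pod("llm-inference-a", 1, 22.0),
    Pod("embedding-svc", 1, 9.0),
    Pod("rag-retriever", 1, 30.0),
    Pod("notebook-dev", 1, 6.0),
]
allocated, used = allocation_report(pods, total_gpus=4)
print(f"allocated: {allocated:.0%}, memory actually used: {used:.0%}")
```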

Then there’s static GPU partitioning. Teams carve out GPUs for training or inference and lock them in. Training jobs finish early and those GPUs just sit there. Meanwhile, inference queues back up even though capacity technically exists. In mixed environments, this alone wastes 40–60% of available GPU cycles.
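To see why static carve-outs waste cycles, here is a toy comparison on an assumed 8-GPU pool with made-up hourly demand: the training job finishes early while inference traffic ramps up. The static split reserves four GPUs for each workload; the pooled model assumes any free GPU can pick up whichever work is waiting. The numbers are illustrative, not a benchmark.

```python
# Hypothetical hourly GPU demand on an 8-GPU pool (illustrative numbers only).
training_demand = [4, 4, 4, 1, 0, 0, 0, 0]   # training finishes early
inference_demand = [2, 3, 4, 6, 7, 6, 5, 3]  # inference traffic ramps up

def static_split(train_cap: int, infer_cap: int) -> tuple[int, int]:
    """Each workload owns a fixed share. Returns (idle, unserved) GPU-hours."""
    idle = unserved = 0
    for t, i in zip(training_demand, inference_demand):
        idle += (train_cap - min(t, train_cap)) + (infer_cap - min(i, infer_cap))
        unserved += max(0, t - train_cap) + max(0, i - infer_cap)
    return idle, unserved

def pooled(total: int) -> tuple[int, int]:
    """Any idle GPU serves whichever workload is waiting. Returns (idle, unserved)."""
    idle = unserved = 0
    for t, i in zip(training_demand, inference_demand):
        demand = t + i
        idle += max(0, total - demand)
        unserved += max(0, demand - total)
    return idle, unserved

print("static 4/4 (idle, unserved):", static_split(4, 4))
print("pooled 8   (idle, unserved):", pooled(8))
```

In this toy run the static split idles 23 of 64 GPU-hours and still leaves 8 GPU-hours of inference unserved, while the pool serves all demand with less idle time.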

Cloud providers try to paper over the problem by throwing more GPUs at it. That works until the bill shows up. Overprovisioning is expensive, visibility across data centers is limited, and there’s no real control over power economics. You pay more, but you’re not actually more efficient.

A lot of enterprises attempt to solve this with homegrown schedulers. They work at small scale, but start falling apart fast. They can’t dynamically slice or re-slice GPUs, don’t understand what’s happening across clusters or data centers, and have no insight into memory pressure or power constraints. Once workloads scale or diversify, the system breaks.

The real issue is this: modern AI environments aren’t homogeneous anymore. You’re running training, inference, RAG, and analytics side by side, and that requires dynamic GPU slicing in real time, guided by workload needs and economic signals rather than static rules.

That’s the gap every enterprise is running into right now, and as with any costly emerging business problem, software is appearing to address it. Kubex is one such software vendor, and I have been involved with the company, advising it on GPU trends and market dynamics.

Kubex’s GPU Optimization Approach

Dynamic xPU Allocation (NVIDIA, AMD, Google TPU)

Kubex isn’t tied to one chip vendor. It’s designed to manage heterogeneous xPUs (NVIDIA GPUs with MIG, AMD GPUs, and Google TPUs) through the same resource allocation engine.

For NVIDIA GPUs, Kubex uses time-slicing, MPS (Multi-Process Service), and MIG (Multi-Instance GPU) to apply dynamic partitioning and workload-aware slicing based on memory, compute, and power characteristics. Coverage for AMD GPUs and TPUs is coming soon.

What that means in practice:

One high-end accelerator can flex between:

- A full device for Llama3 training

- Multiple inference slices during peak traffic

- Mixed partitions for RAG and analytics
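As a rough illustration of one device flexing between these modes, the sketch below encodes the MIG profiles NVIDIA documents for the A100 40GB (7 compute slices, 8 memory slices of 5 GB each) and checks whether a requested layout fits that budget. It is a simplified, necessary-but-not-sufficient check: real MIG also enforces placement rules, so the driver may still reject a layout that passes here. The layouts themselves are hypothetical.

```python
# MIG profiles for an A100 40GB: name -> (compute slices, memory slices).
# The device has 7 compute slices and 8 memory slices of 5 GB each.
A100_40GB_PROFILES = {
    "1g.5gb": (1, 1),
    "2g.10gb": (2, 2),
    "3g.20gb": (3, 4),
    "4g.20gb": (4, 4),
    "7g.40gb": (7, 8),
}
COMPUTE_SLICES, MEMORY_SLICES = 7, 8

def fits_budget(layout: list[str]) -> bool:
    """Necessary, not sufficient: total slices must stay within the device.

    Real MIG also enforces placement rules, so the driver may still
    reject a layout that passes this check.
    """
    compute = sum(A100_40GB_PROFILES[p][0] for p in layout)
    memory = sum(A100_40GB_PROFILES[p][1] for p in layout)
    return compute <= COMPUTE_SLICES and memory <= MEMORY_SLICES

# Hypothetical layouts for the three modes listed above, plus one that cannot fit.
layouts = {
    "training": ["7g.40gb"],
    "peak inference": ["1g.5gb"] * 7,
    "RAG + analytics": ["3g.20gb", "2g.10gb", "1g.5gb", "1g.5gb"],
    "over-committed": ["4g.20gb", "4g.20gb"],
}
for name, layout in layouts.items():
    print(f"{name}: {layout} fits={fits_budget(layout)}")
```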

Kubex does this automatically:

- Matches the right slice profile to each workload

- Intelligently schedules new jobs to optimize node loading in real time as jobs finish or queues spike

- Optimizes for business priority, not FIFO scheduling

No restarts. No manual tuning. No vendor lock-in. xPUs doing useful work continuously.
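The “business priority, not FIFO” point can be illustrated with a plain priority queue. This is a hypothetical sketch using Python’s standard `heapq` module, not Kubex’s scheduler: the job names and priority levels are invented, lower numbers mean more important, and an arrival counter breaks ties so equal-priority jobs stay first-come, first-served.

```python
import heapq
from itertools import count

# Hypothetical arrivals in FIFO order: (job name, business priority; 0 = most important).
arrivals = [
    ("nightly-analytics", 3),
    ("fine-tune-experiment", 2),
    ("checkout-recs-inference", 0),
    ("fraud-model-inference", 0),
    ("eval-sweep", 2),
]

def fifo_order(jobs: list[tuple[str, int]]) -> list[str]:
    """First come, first served: priority is ignored."""
    return [name for name, _ in jobs]

def priority_order(jobs: list[tuple[str, int]]) -> list[str]:
    """Pop by business priority; an arrival counter breaks ties stably."""
    seq = count()
    heap = [(prio, next(seq), name) for name, prio in jobs]
    heapq.heapify(heap)
    return [heapq.heappop(heap)[2] for _ in range(len(heap))]

print("FIFO:    ", fifo_order(arrivals))
print("priority:", priority_order(arrivals))
```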

Heterogeneous Workload Optimization

Kubex understands workload intent, not just resource requests.

- Training workloads get full GPUs when needed, then gracefully fragment post-epoch

- Inference pipelines receive low-latency slices with guaranteed memory headroom

- RAG workloads get memory-optimized profiles

- Analytics jobs burst during off-peak training windows
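One way to express intent-aware placement is a small policy function. The sketch below is hypothetical: it reuses the A100 40GB MIG profile sizes, and the headroom percentages (25% for inference, 50% for RAG) and the off-peak rule for analytics are assumed values chosen to mirror the list above, not Kubex defaults. Picking the smallest profile by memory, then by compute, means a 20 GB need lands on `3g.20gb` rather than `4g.20gb`, which keeps the memory-to-compute ratio high.

```python
from typing import Optional

# A100 40GB MIG profiles: name -> (compute slices, memory in GB).
PROFILES = {
    "1g.5gb": (1, 5),
    "2g.10gb": (2, 10),
    "3g.20gb": (3, 20),
    "4g.20gb": (4, 20),
    "7g.40gb": (7, 40),
}
# Hypothetical memory headroom per workload intent.
HEADROOM = {"inference": 0.25, "rag": 0.50, "analytics": 0.0}

def choose_profile(kind: str, mem_gb: float, off_peak: bool = True) -> Optional[str]:
    """Map workload intent to a MIG profile; None means defer or does not fit."""
    if kind == "training":
        return "7g.40gb"  # full device while training runs
    if kind not in HEADROOM:
        raise ValueError(f"unknown workload kind: {kind}")
    if kind == "analytics" and not off_peak:
        return None  # wait for an off-peak training window
    need = mem_gb * (1 + HEADROOM[kind])
    fitting = [name for name, (_, mem) in PROFILES.items() if mem >= need]
    if not fitting:
        return None
    # Smallest memory first, then fewest compute slices (memory-optimized tie-break).
    return min(fitting, key=lambda n: (PROFILES[n][1], PROFILES[n][0]))

print(choose_profile("inference", 7))                  # needs 8.75 GB with headroom
print(choose_profile("rag", 8))                        # needs 12 GB with headroom
print(choose_profile("analytics", 4, off_peak=False))  # deferred until off-peak
```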

Power & Memory Tracking (2026 Must-Have)

One of the things that drew me to Kubex was that it doesn’t just schedule GPUs, it governs them.

- Power draw tracked per slice, per model

- Memory pressure alerts before OOM failures

- Model-to-GPU right-sizing (stop running small models on power-hungry GPUs)

This is no longer optional. It’s table stakes.
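Early memory-pressure warnings can be as simple as a threshold plus a trend check. This sketch is an assumption-laden illustration, not Kubex’s alerting logic: it takes plain per-slice memory samples in GB (in practice these would come from a telemetry source such as NVML or DCGM), alerts above 90% of capacity, and also alerts when a naive linear extrapolation of recent growth would exceed capacity within a few samples.

```python
from typing import Optional

def memory_alert(samples_gb: list[float], capacity_gb: float,
                 threshold: float = 0.90, horizon: int = 5) -> Optional[str]:
    """Return an alert message if memory pressure suggests an upcoming OOM, else None."""
    current = samples_gb[-1]
    if current >= threshold * capacity_gb:
        return f"high: {current:.1f} of {capacity_gb:.0f} GB in use"
    if len(samples_gb) >= 2:
        # Average growth per sample across the window, extrapolated linearly.
        slope = (samples_gb[-1] - samples_gb[0]) / (len(samples_gb) - 1)
        projected = current + slope * horizon
        if projected >= capacity_gb:
            return f"trend: projected {projected:.1f} GB within {horizon} samples"
    return None

# Hypothetical samples from a 10 GB slice.
print(memory_alert([4.0, 4.1, 4.0, 4.2], 10))  # steady
print(memory_alert([5.0, 6.0, 7.0, 8.0], 10))  # climbing
print(memory_alert([9.2], 10))                 # already near the limit
```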

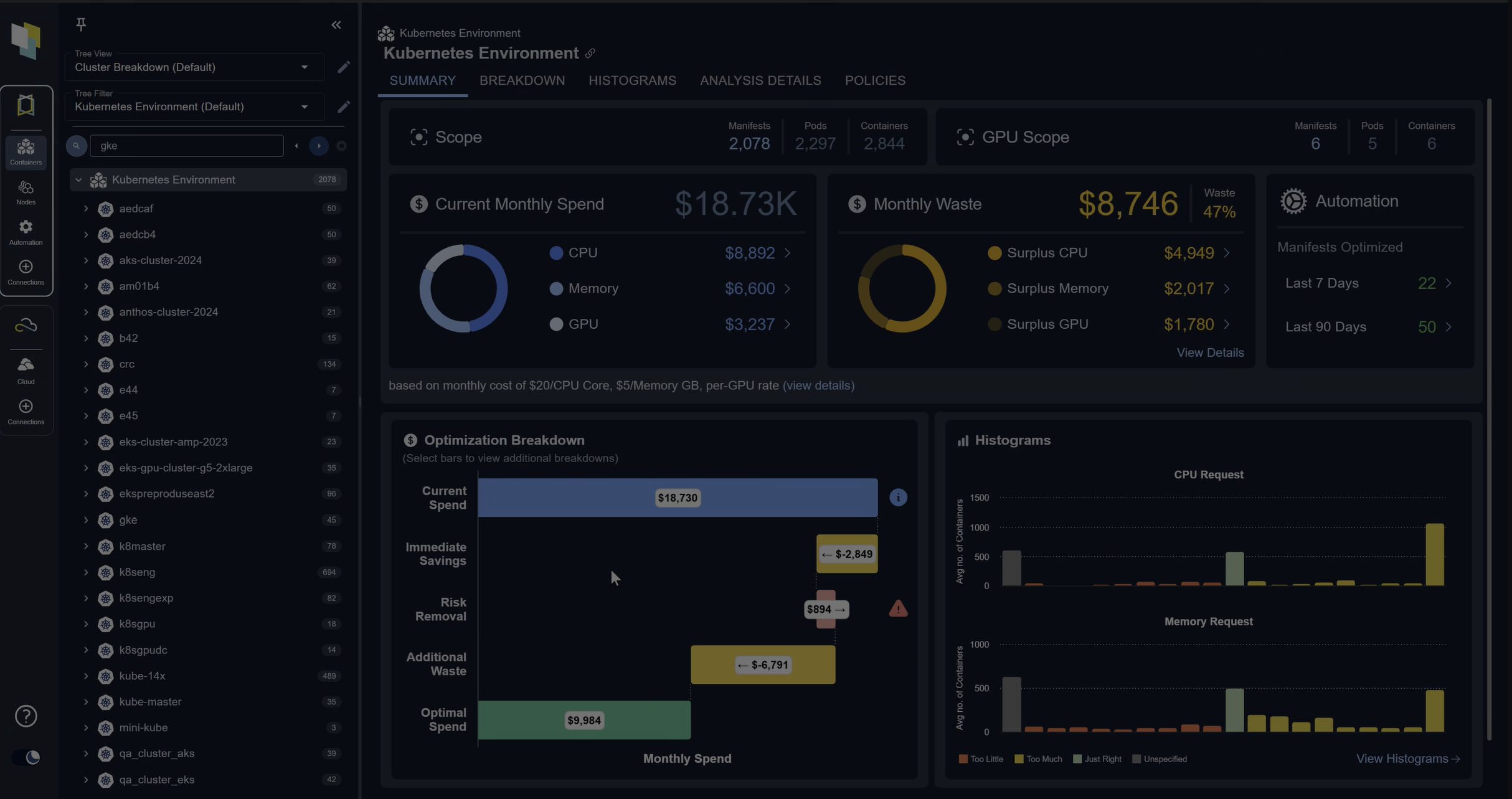

Kubex’s Multi-Scale Visibility (A unified view that many teams need today)

Kubex gives operators a single pane of glass across all GPU resources.

Scale IN — Per GPU

- Slice-level utilization (memory, compute, power)

- MIG profile efficiency

Scale OUT — Per Cluster

- Optimal workload mixing recommendations

- Training vs inference balancing

- Burst windows for analytics

Example: Power efficiency at 2.1 TFLOPS/watt

Future-Proofing for a Power-Constrained World

By 2027, power, not GPUs, becomes the real currency.

- New data centers capped at ~100 MW

- Cooling limits tightening

- Regulators scrutinizing energy efficiency
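A back-of-the-envelope calculation shows why a site power cap, rather than GPU supply, sets the ceiling. All inputs are assumptions for illustration: a 100 MW facility, 700 W accelerator boards, a PUE (power usage effectiveness) of 1.3, and 50% extra IT power per accelerator for hosts, networking, and storage.

```python
def max_accelerators(site_mw: float, watts_per_accel: float,
                     pue: float, host_overhead: float) -> int:
    """Upper bound on accelerators a power-capped site can run at full load.

    site_mw:         facility power cap in megawatts
    watts_per_accel: accelerator board power in watts
    pue:             power usage effectiveness (total facility power / IT power)
    host_overhead:   extra IT power per accelerator (CPUs, NICs, storage) as a fraction
    """
    it_budget_w = site_mw * 1e6 / pue
    per_accel_w = watts_per_accel * (1 + host_overhead)
    return int(it_budget_w // per_accel_w)

# Assumed inputs: 100 MW cap, 700 W boards, PUE 1.3, 50% host overhead.
print(max_accelerators(100, 700, 1.3, 0.5))
```

With those inputs the site tops out at roughly 73,000 accelerators, and the remaining levers are doing more work per watt or improving facility efficiency.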

Kubex enables:

- Power-per-model accountability

- Killing power-inefficient “zombie models”

- Prioritizing flops-per-watt, not flops alone

This is how enterprises scale AI without violating physical reality.
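Flops-per-watt accounting and zombie detection reduce to simple ratios once per-model power and throughput are measured. The sketch below uses an invented three-model fleet, and its “zombie” rule (fewer than 10,000 requests served per kWh) is a hypothetical threshold chosen for illustration; the summarizer’s numbers are picked to land on the 2.1 TFLOPS/watt example figure.

```python
from dataclasses import dataclass

@dataclass
class ModelStats:
    name: str
    avg_power_w: float        # average power drawn by the model's GPU slices
    sustained_tflops: float   # achieved (not peak) throughput
    requests_per_hour: float  # useful work actually served

def rank_by_efficiency(models: list[ModelStats]) -> list[tuple[str, float]]:
    """Sort models by TFLOPS per watt, best first."""
    scored = [(m.name, m.sustained_tflops / m.avg_power_w) for m in models]
    return sorted(scored, key=lambda s: s[1], reverse=True)

def find_zombies(models: list[ModelStats], min_requests_per_kwh: float) -> list[str]:
    """Flag models that keep drawing power while serving little traffic."""
    flagged = []
    for m in models:
        kwh_per_hour = m.avg_power_w / 1000.0
        if m.requests_per_hour / kwh_per_hour < min_requests_per_kwh:
            flagged.append(m.name)
    return flagged

# Hypothetical fleet measurements.
fleet = [
    ModelStats("search-ranker", 300, 450, 90_000),
    ModelStats("legacy-chatbot-v1", 400, 120, 200),
    ModelStats("summarizer", 200, 420, 40_000),
]
for name, eff in rank_by_efficiency(fleet):
    print(f"{name:18s} {eff:.1f} TFLOPS/W")
print("zombie candidates:", find_zombies(fleet, min_requests_per_kwh=10_000))
```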

What You Achieve Without Manual Effort

- Unlock significant GPU capacity gains from what you already own

- 40% lower cloud GPU spend by eliminating overprovisioning

- Clear signal on which models to scale—and which to shut down

- Built-in power compliance for regulated environments

- One view of all GPU economics across all data centers

Why This Is Urgent

If training and inference share your clusters, you’re almost certainly wasting 50%+ of your GPU capacity and burning power you can’t recover.

Kubex helps your existing GPUs behave like real capital assets.

Another reason I like advising Kubex is that the software is accessible and easy to get running, so you can see the value for yourself.

Connect with me on Medium.