Resource optimization across GKE Standard, Autopilot, and Anthos.

Agent-driven optimization of pods, storage, autoscalers, and node fleets across GKE Standard, Autopilot, and Anthos — landing as node-pool definitions, workload patches, or Terraform diffs.

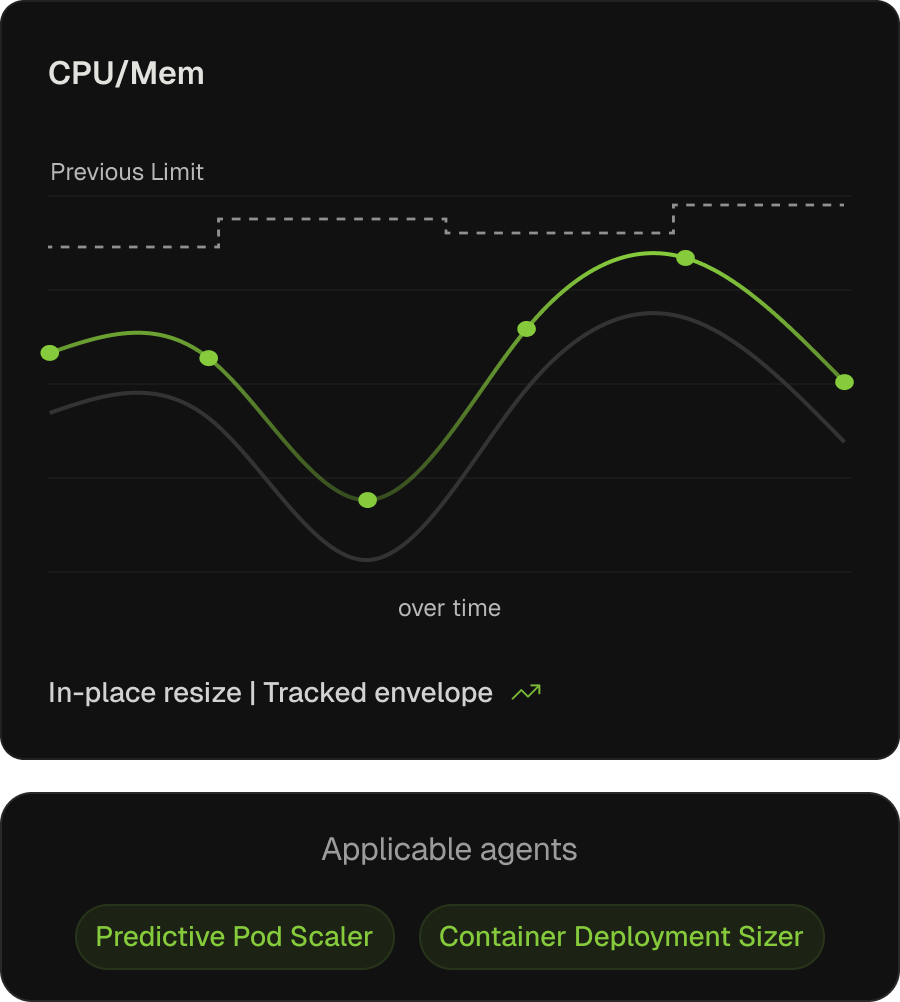

Request and limit specifications, kept in sync with workload reality.

On GKE, the workload right-sizing recommender produces VPA-driven guidance, and on Autopilot the lever is more direct, since billing follows the resources a pod requests regardless of node fill. Neither closes the loop on its own. Kubex applies right-sizing continuously via mutating webhooks and in-place resize, leaving GKE-managed namespaces untouched.

Continuous request right-sizing

Tuned from learned utilization, freeing capacity the cluster autoscaler holds in reserve.

Limits prevent OOM and throttling

Shaped to actual peak behaviour, not template defaults.

Predictive scaling and new-workload sizing

Predictive Pod Scaler resizes ahead of learned patterns; Container Deployment Sizer drafts new specs via MCP.
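
As a rough illustration of what sizing a request from learned utilization can look like, the sketch below takes a high percentile of observed usage and adds headroom. The function name, the percentile, and the headroom value are assumptions made for this example; they are not Kubex's actual model.

```python
import math

def recommend_request(samples, percentile=95, headroom=0.15):
    """Hypothetical helper: request = nearest-rank percentile of usage, plus headroom.

    samples    -- observed usage values (e.g. CPU millicores)
    percentile -- integer percentile in (0, 100]
    headroom   -- fractional safety margin added on top
    """
    if not samples:
        raise ValueError("need at least one usage sample")
    ordered = sorted(samples)
    # Nearest-rank percentile: the smallest sample that has at least
    # `percentile` percent of all observations at or below it.
    rank = max(1, math.ceil(percentile * len(ordered) / 100))
    return ordered[rank - 1] * (1 + headroom)

# Illustrative samples only; the single 900m spike is ignored at p90.
cpu_millicores = [120, 135, 150, 160, 180, 210, 240, 260, 300, 900]
print(round(recommend_request(cpu_millicores, percentile=90, headroom=0.1)))  # 330
```

A percentile rather than the maximum keeps one-off spikes from inflating the request; the limit, not the request, is the place to absorb true peaks.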

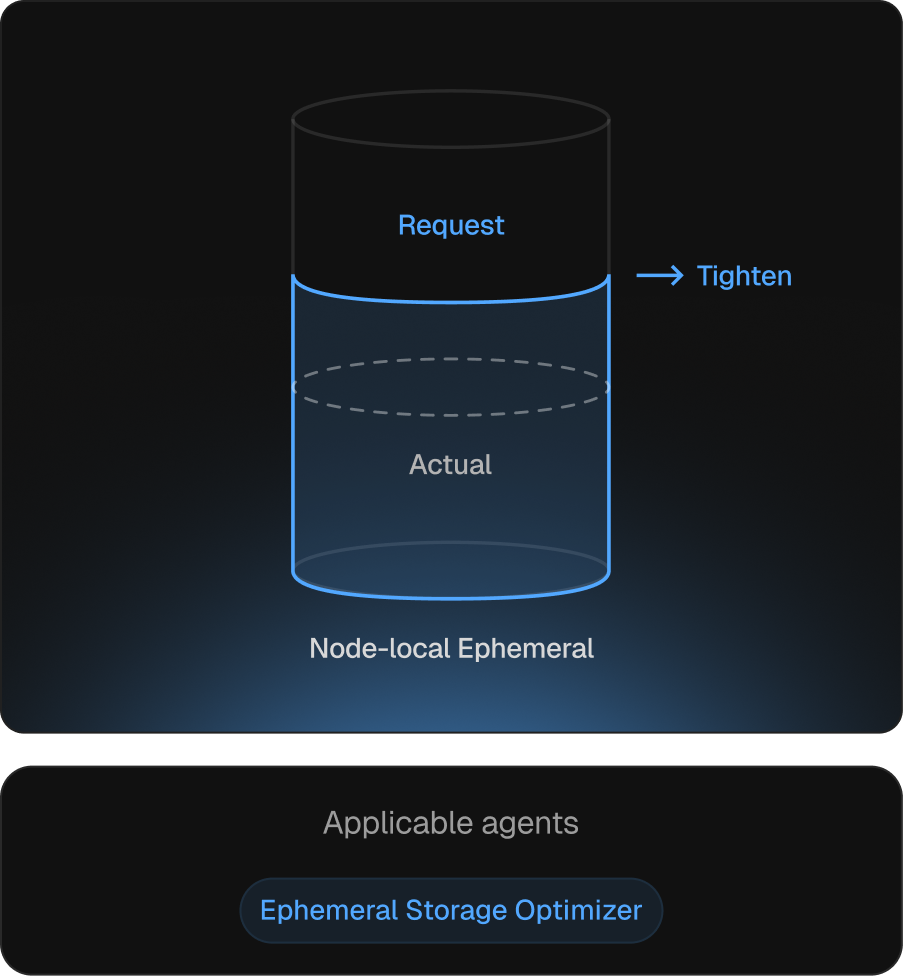

Local storage requests aligned to actual disk pressure.

Ephemeral storage is the resource most teams discover during an incident. On GKE, under-specified ephemeral-storage requests lead to disk-pressure evictions; over-specified requests cap pod density. Kubex tracks usage and adjusts requests via the same in-place path as CPU and memory.

Pressure-driven scheduling stays accurate

Requests reflect real disk consumption, not worst-case guesses.

Capacity restoration

Right-sized requests release headroom held against worst-case usage.

Disk-pressure evictions eliminated upstream

Requests track growth, so disk-pressure conditions never form.
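
The density effect is simple arithmetic: the scheduler counts ephemeral-storage requests against a node's allocatable storage, so the request size bounds how many pods fit. A minimal sketch follows, with a hypothetical helper name and made-up numbers, ignoring CPU, memory, and max-pods limits.

```python
def max_pods_by_ephemeral_storage(allocatable_gib: float, request_gib: float) -> int:
    """Hypothetical helper: pods that fit on a node if ephemeral storage is the only constraint."""
    if request_gib <= 0:
        raise ValueError("request must be positive")
    return int(allocatable_gib // request_gib)

# Illustrative numbers only, not measurements.
print(max_pods_by_ephemeral_storage(100, 10))  # worst-case guess per pod -> 10
print(max_pods_by_ephemeral_storage(100, 4))   # request aligned to usage -> 25
```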

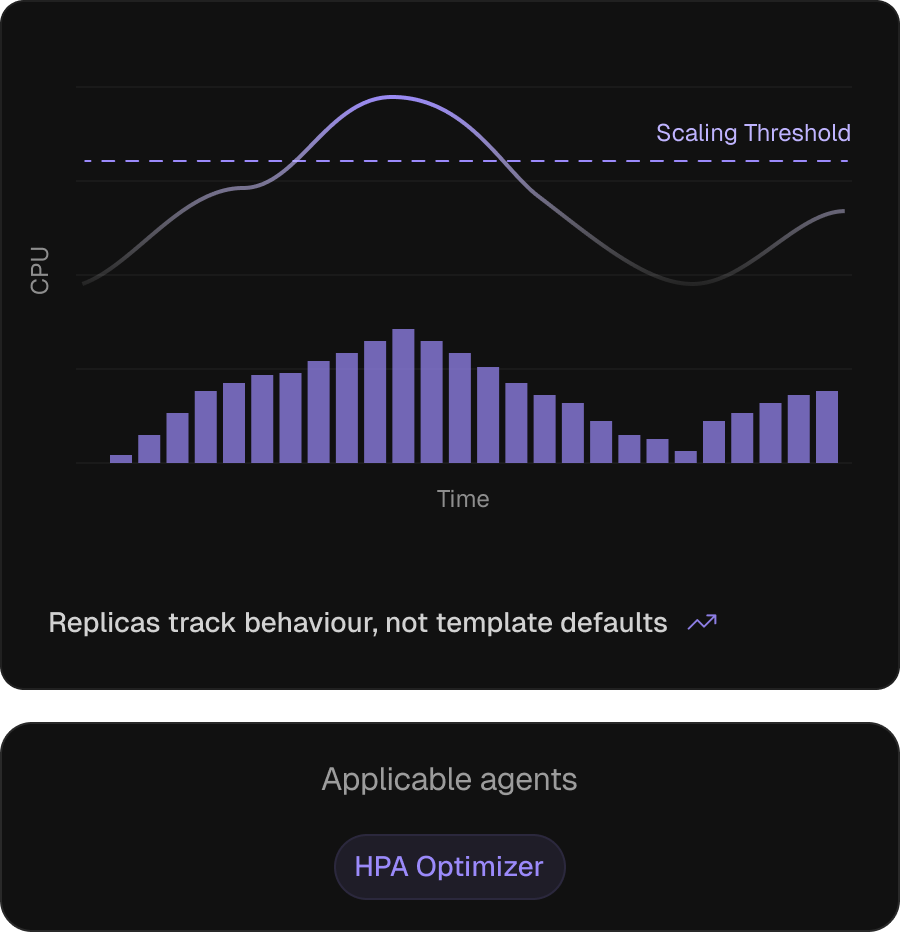

Horizontal autoscaling, configured from how the workload behaves.

On GKE, HPA (paired with KEDA, custom metrics, or Pub/Sub triggers) carries elasticity for most workloads, but keeping it correct is hard: thresholds are inherited from templates, scaling policies stay at their defaults, and HPAs outlive the pod sizing they were tuned for. The HPA Optimizer recomputes thresholds, scale policies, and replica bounds against today’s workload.

Thresholds re-anchored after pod sizing

Recomputed when right-sizing shifts the request denominator.

Scale policies tuned to reaction time

Against observed behaviour, not Helm-chart defaults.

OOM and throttling shielded

Flags HPA settings that let pods hit throttling or OOM before scale-out.
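
Why thresholds need re-anchoring: an HPA utilization target is a percentage of the pod's request, so changing the request silently moves the absolute usage at which scaling triggers. The sketch below uses the documented HPA formula, desiredReplicas = ceil(currentReplicas × currentMetric / targetMetric), and ignores the tolerance band and stabilization windows. The function names and numbers are illustrative assumptions.

```python
import math

def reanchored_target(old_target_pct, old_request, new_request):
    """Utilization target that keeps the same absolute usage trigger after a request change."""
    return old_target_pct * old_request / new_request

def desired_replicas(current_replicas, current_utilization_pct, target_pct):
    """Core HPA replica calculation (tolerance and scale policies omitted)."""
    return math.ceil(current_replicas * current_utilization_pct / target_pct)

# Before right-sizing: 1000m request, 50% target, so scale-out begins near 500m per pod.
# After right-sizing: 625m request. The same 500m of usage now reads as 80% utilization.
new_target = reanchored_target(50, old_request=1000, new_request=625)
print(new_target)                           # 80.0

# With the stale 50% target, 4 steady pods at 500m each would be scaled out for no reason.
print(desired_replicas(4, 80, 50))          # 7
print(desired_replicas(4, 80, new_target))  # 4
```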

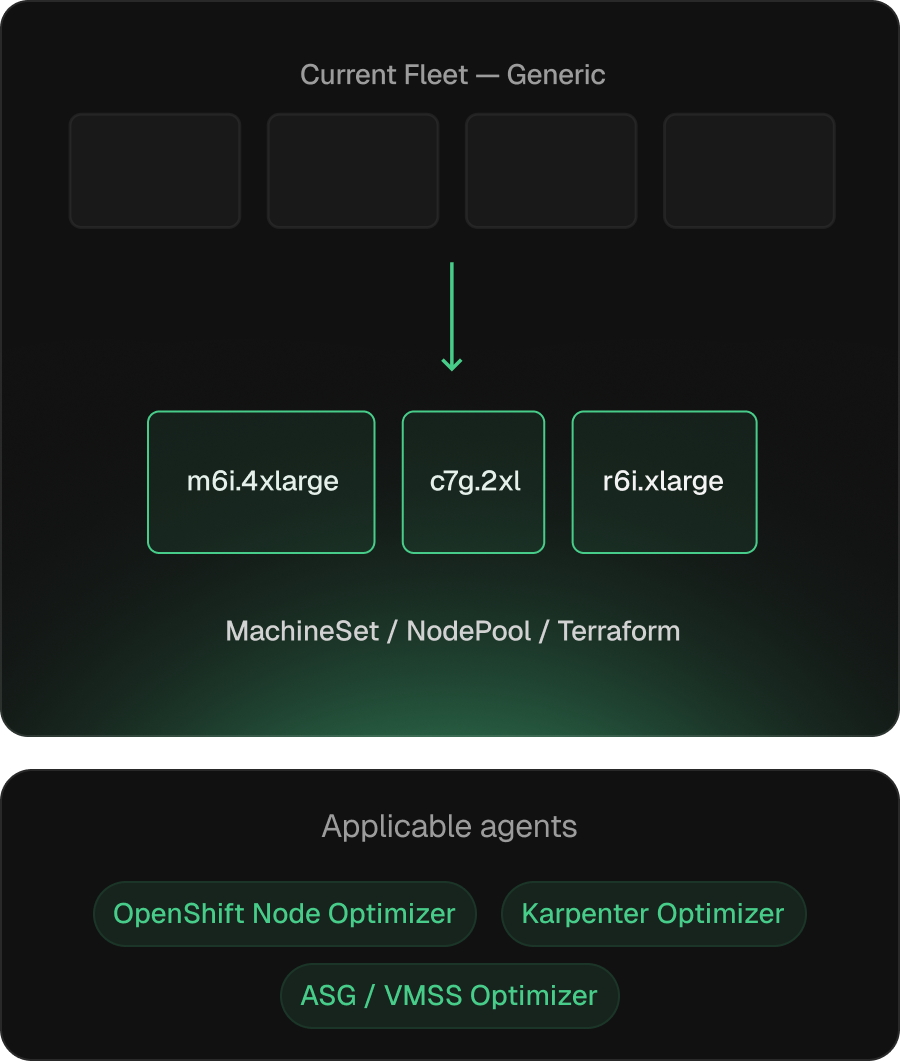

Node fleets that match the workload they actually run.

GKE node-pool composition drifts from workload reality once pod sizing changes land. Kubex addresses this in three modes: machine-type and managed instance group (MIG) parameter recommendations on GKE Standard (via Terraform, gcloud, or GitOps); request/limit tuning on Autopilot, where billing tracks the spec directly; and node-pool-style recommendations against ASG-backed compute under Anthos cross-cloud.

Output is the artifact, not a recommendation

GKE node-pool definitions, Autopilot workload patches, or Terraform diffs through existing change-management.

Aware of GKE Standard, Autopilot, and Anthos cross-cloud

Each cluster type gets the right surface — node-pool specs, request/limit tuning, or ASG-backed recommendations.

Continuous re-evaluation as pod sizing evolves

Recommendations are recomputed as pod requests change.

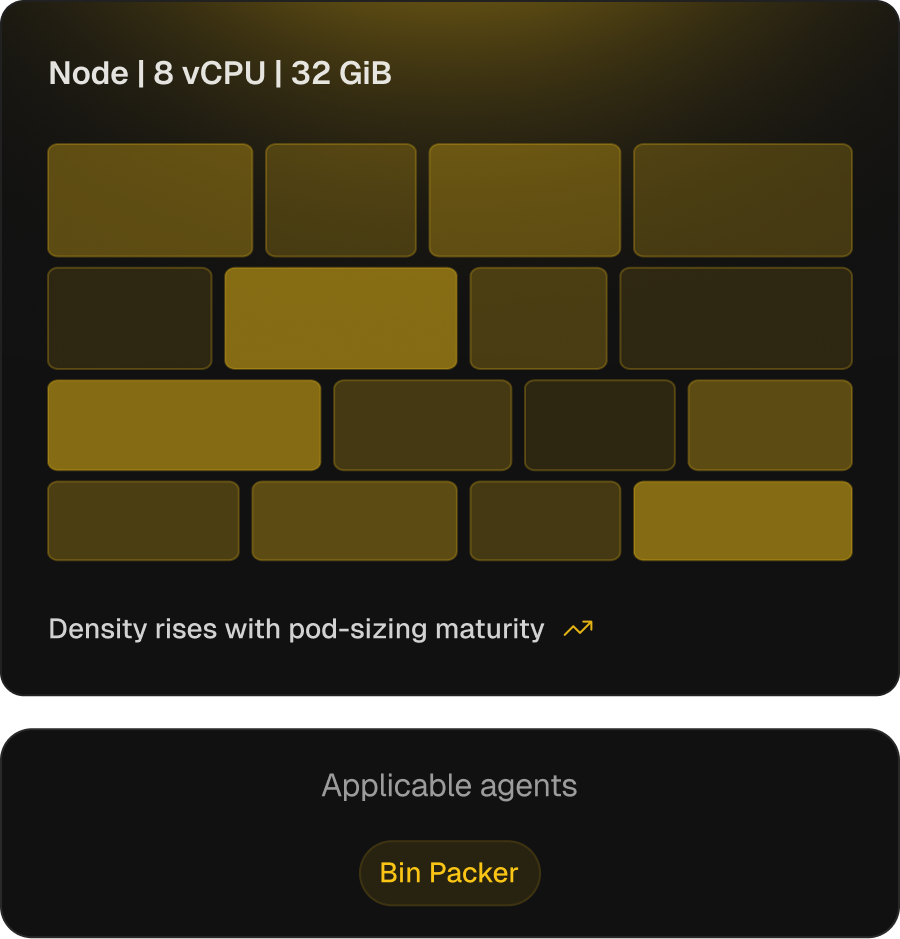

Higher pod density, with safety bounds that keep it usable.

On GKE Standard, the cluster autoscaler and the scheduler under-pack by default; the bin-packing strategies (MostAllocated, RequestedToCapacityRatio) trade that slack for density. Tuning them before right-sizing pods is the failure mode: overstacking, throttling, OOM. The Bin Packer ties density to pod-sizing maturity, raising it as sizing stabilises.

Max-pods and strategy per node type

Aligned to actual pod profile — MostAllocated / RequestedToCapacityRatio.

Consolidation thresholds move with pod-sizing maturity

GKE cluster-autoscaler optimization profiles and node-pool autoscaling auto-tuned to pod-sizing accuracy. On Autopilot, request/limit tuning replaces consolidation entirely.

Per-pool profiles, including Autopilot

Standard, Spot, and GPU pools each get their own consolidation profile; Autopilot workloads get request/limit profiles, since billing tracks the spec.
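
To make the strategy names concrete, here is a simplified, single-resource sketch of how the upstream Kubernetes scheduler's LeastAllocated and MostAllocated scoring rank nodes. The real plugin averages weighted scores across several resources, and `pick_node` is a hypothetical helper written for this example.

```python
MAX_NODE_SCORE = 100

def least_allocated(requested, allocatable):
    """Favors emptier nodes (spreading)."""
    return (allocatable - requested) * MAX_NODE_SCORE / allocatable

def most_allocated(requested, allocatable):
    """Favors fuller nodes (bin packing)."""
    return requested * MAX_NODE_SCORE / allocatable

def pick_node(nodes, pod_request, score_fn):
    """nodes maps name -> (already_requested, allocatable); returns the best feasible node or None."""
    scores = {
        name: score_fn(used + pod_request, alloc)
        for name, (used, alloc) in nodes.items()
        if used + pod_request <= alloc  # skip nodes the pod does not fit on
    }
    return max(scores, key=scores.get) if scores else None

# Illustrative nodes: CPU cores already requested, and cores allocatable.
nodes = {"busy": (5.0, 8.0), "idle": (1.0, 8.0)}
print(pick_node(nodes, 1.0, least_allocated))  # idle
print(pick_node(nodes, 1.0, most_allocated))   # busy
```

Packing onto the busy node is only safe when the requests reflect real usage, which is why density is tied to pod-sizing maturity.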

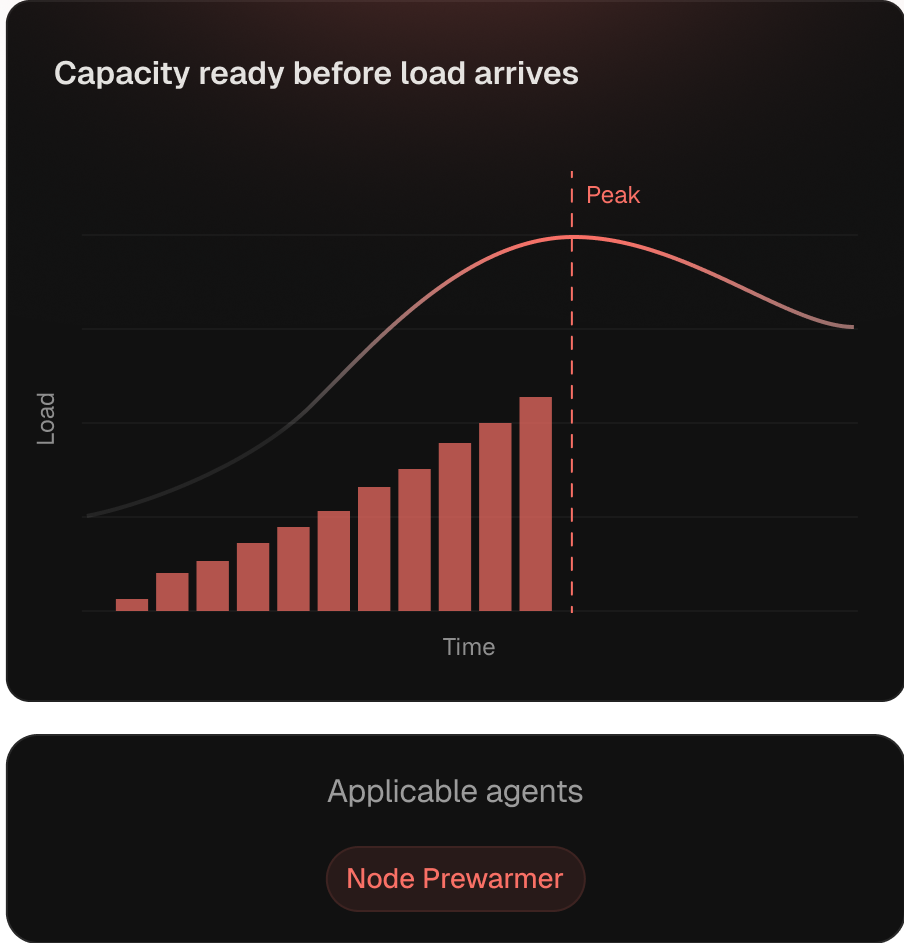

Capacity initialized before the load curve hits.

Reactive autoscaling adds nodes only after pressure arrives, a cold-start cost that daily-cyclical workloads pay every day. The Node Prewarmer provisions ahead of forecast demand using Kubex’s pattern models. The payoff is largest for GPU inference, where CUDA image pulls and model loading dominate cold starts.

Predictive scheduling against learned patterns

Runs ahead of the daily load cycle, not after pressure.

GPU-aware pre-warming

CUDA image pulls and model load are accounted for, so warm-up doesn’t eat into inference SLOs.

Coordinated with bin packing

Pre-warm respects consolidation thresholds, so headroom doesn’t fight stable-load density.
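
A toy version of provisioning ahead of a learned daily pattern: forecast each hour as the average of that hour on previous days, then schedule extra nodes a fixed lead time before demand exceeds the baseline. The function names, the hourly granularity, and the averaging model are assumptions for illustration; they are not Kubex's pattern models.

```python
import math
from statistics import mean

def hourly_forecast(history):
    """history: list of days, each a list of 24 hourly node-demand values."""
    return [mean(day[hour] for day in history) for hour in range(24)]

def prewarm_plan(forecast, baseline_nodes, lead_hours=1):
    """Return {start_hour: extra_nodes} so capacity exists before forecast demand arrives."""
    plan = {}
    for hour, demand in enumerate(forecast):
        extra = math.ceil(demand) - baseline_nodes
        if extra > 0:
            start = (hour - lead_hours) % 24  # wrap around midnight
            plan[start] = max(plan.get(start, 0), extra)
    return plan

# Two illustrative days: flat demand of 4 nodes with a 09:00 peak.
day1 = [4] * 24
day2 = [4] * 24
day1[9], day2[9] = 8, 10
print(prewarm_plan(hourly_forecast([day1, day2]), baseline_nodes=4))  # {8: 5}
```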

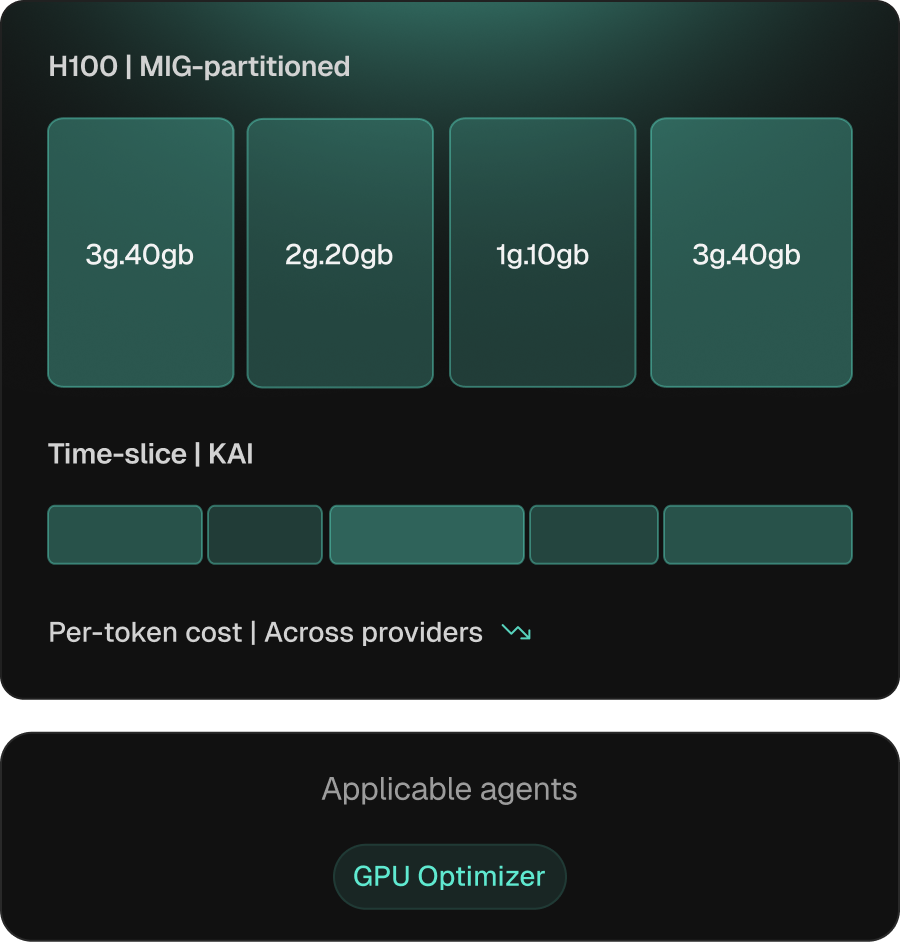

Inference and training, sized to the right GPU.

GPU workloads bring decisions CPU tooling doesn’t make: sharing strategy, partitioning, and SKU. On GKE, Kubex covers all of it: time-slicing via NVIDIA KAI, Multi-Instance GPU (MIG) partitioning on Ampere/Hopper/Blackwell, SKU selection, and cross-provider analysis across GKE GPU and TPU SKUs (A2, A3, G2, TPU v5e/v5p), neoclouds, and adjacent CSPs.

Per-workload sharing strategy

MIG, time-slicing, or MPS — by isolation, flexibility, or memory profile.

SKU selection includes provider economics

Factors in benchmarks, availability, and pricing, not just the local default.

Cross-provider price/performance

Workloads evaluated across CSPs, neoclouds, and on-prem — comparison, not auto-move.

See how Kubex looks against your GKE cluster.

A walk-through of the agent surface and change-management flow — on a cluster you actually run.